When a CFO tells us they're "deploying AI in finance," we ask one question back. Which layer.

Most people answer with a model name. Claude, ChatGPT, Gemini, Copilot. That answer is the problem, because the model is no longer the part of the stack that decides whether your deployment works or what it costs.

Here is the paradox we keep watching CFOs walk into. Per-token model prices have been falling for two years. Open-weight competitors are at the door. The unit economics of "use a model" trend toward zero on a long enough timeline. And yet your AI bill keeps growing, your pilots keep stalling, and your auditor keeps asking uncomfortable questions.

The unit price is cheap and getting cheaper. Your bill is the unit price multiplied by how many tokens you push through the model and how much rework the model has to do. The first number is set by the labs. The second and third are set by the four layers underneath the model, and almost no one is buying or building those layers on purpose.
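The arithmetic in that paragraph can be sketched in a few lines. This is a minimal illustration, not a billing model: `monthly_bill`, `tokenizer_inflation`, and `rework_factor` are hypothetical names, and every number is a placeholder rather than a vendor rate or a measured workload.

```python
def monthly_bill(price_per_million_tokens: float,
                 nominal_tokens: float,
                 tokenizer_inflation: float = 1.0,
                 rework_factor: float = 1.0) -> float:
    """Bill = unit price x tokens actually billed x how often work is redone.

    tokenizer_inflation: billed tokens / tokens you expected for the same input.
    rework_factor: average number of attempts per task (1.0 = no retries).
    """
    effective_tokens = nominal_tokens * tokenizer_inflation * rework_factor
    return price_per_million_tokens * effective_tokens / 1_000_000


# Placeholder inputs: the sticker price is identical in both scenarios.
baseline = monthly_bill(3.00, 2_000_000_000)
after = monthly_bill(3.00, 2_000_000_000, tokenizer_inflation=1.3, rework_factor=1.2)
print(f"baseline ${baseline:,.0f}, after ${after:,.0f}, change {after / baseline - 1:.0%}")
```

The unit price never moves between the two calls. The whole change comes from the two multipliers the rate card does not show.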

The model is the cheap part. Everything underneath it determines whether your AI deployment survives a quarter.

Layer 1. The bill mystery.

Here are two symptoms most CFOs are watching but haven't connected.

Anthropic shipped its newest Claude model last month at the same sticker price as the prior version, and bills for the same workloads jumped by up to a third because the new tokenizer chops the same input into more pieces. Invoice files, GL extracts, and trial balance dumps took the worst of it. Those are the payloads that get serialized and shoveled into the model whole.

A few weeks earlier, the default window for caching long-running prompts quietly shrank from an hour to five minutes. Any workflow that waits on a human approval, an ERP call, or a slow OCR step now re-pays full input cost when it picks back up, and the rate card never showed the change.
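A rough sketch of why a pause that outlasts the cache window matters. It assumes cached input is billed at a fraction of the full input price; the `cache_read_multiplier` of 0.1, the token count, and the price are illustrative assumptions, so check your own rate card before relying on them.

```python
def resume_input_cost(prompt_tokens: int,
                      pause_seconds: float,
                      cache_ttl_seconds: float,
                      price_per_million: float,
                      cache_read_multiplier: float = 0.1) -> float:
    """Input cost of resuming a paused workflow.

    If the pause outlasts the cache window, the whole prompt is billed
    at the full input price again; otherwise it is billed at the
    discounted cached-read rate. The surcharge for writing the cache
    entry is left out to keep the comparison simple.
    """
    full_cost = prompt_tokens * price_per_million / 1_000_000
    if pause_seconds > cache_ttl_seconds:
        return full_cost
    return full_cost * cache_read_multiplier


# A 12-minute human approval on a 200k-token prompt (placeholder numbers).
pause = 12 * 60
one_hour = resume_input_cost(200_000, pause, 3600, price_per_million=3.00)
five_min = resume_input_cost(200_000, pause, 300, price_per_million=3.00)
print(f"1-hour window: ${one_hour:.2f}  5-minute window: ${five_min:.2f}")
```

Under these assumptions the same approval step costs ten times more to resume once the window is shorter than the pause.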

Both moves are symptoms, not causes. The cause is that your agent is processing more raw payload than it needs to, on a longer wall clock than it needs to. The vendor's pricing levers move and your bill jumps because your stack is exposed in places it shouldn't be. Which is the bridge to Layer 2.

Layer 2. The data layer.

Most teams treat their database as a dumb store and try to compensate with retrieval gymnastics, larger context windows, and more elaborate prompts. That approach is exactly what got punished by the tokenizer change. The agent burns tokens reconstructing relationships that should already live in the schema, and it produces worse outputs because it is reasoning over flat exports of data that has structure the agent cannot see.

Put the structure in the schema. Build the database the way a good controller builds a chart of accounts: enforce the relationships, put guardrails on who sees what, pre-compute the rollups everyone needs. When the data layer holds shape, the agent's job collapses to asking the right question. That means less paginating through dumps and fewer hallucinated joins, and model swaps become a config change because the contract that matters lives in the schema.
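A small sketch of what "structure in the schema" can look like, using Python's built-in SQLite as a stand-in for a production database. The table names, accounts, and amounts are invented for illustration, and the plain view here stands in for a rollup that a larger system might materialize.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite only enforces FKs when asked
conn.executescript("""
CREATE TABLE accounts (
    account_id INTEGER PRIMARY KEY,
    name       TEXT NOT NULL,
    category   TEXT NOT NULL CHECK (category IN ('revenue', 'expense'))
);
CREATE TABLE gl_entries (
    entry_id   INTEGER PRIMARY KEY,
    account_id INTEGER NOT NULL REFERENCES accounts(account_id),
    period     TEXT NOT NULL,          -- e.g. '2026-01'
    amount     REAL NOT NULL
);
-- The rollup everyone needs, defined once in the database.
CREATE VIEW period_totals AS
SELECT e.period, a.category, SUM(e.amount) AS total
FROM gl_entries e JOIN accounts a USING (account_id)
GROUP BY e.period, a.category;
""")
conn.executemany("INSERT INTO accounts VALUES (?, ?, ?)",
                 [(4000, "Subscription revenue", "revenue"),
                  (6000, "Cloud hosting", "expense")])
conn.executemany(
    "INSERT INTO gl_entries (account_id, period, amount) VALUES (?, ?, ?)",
    [(4000, "2026-01", 1200.0), (4000, "2026-01", 800.0), (6000, "2026-01", 300.0)])

# The agent asks one question and gets a few rows instead of a raw GL dump.
rollup = conn.execute(
    "SELECT category, total FROM period_totals WHERE period = ? ORDER BY category",
    ("2026-01",)).fetchall()
print(rollup)

# An entry pointing at a nonexistent account is rejected by the schema itself.
try:
    conn.execute(
        "INSERT INTO gl_entries (account_id, period, amount) VALUES (9999, '2026-01', 1.0)")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True
```

The agent never sees the join or the aggregation. It sees two rows, and the bad entry never reaches it at all.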

This is the answer to the bill mystery. The teams whose AI bills are growing all share one thing: their data layer pushes raw payload straight into context. The teams whose bills are flat or shrinking on the same workloads have done the opposite work. They've made the database carry the load the model used to be asked to carry. Same model on top, different bill. The teams getting AI-in-finance right spend less time tuning prompts and more time in the database, writing the schemas and policies the agent should never have to reason about.

Layer 3. The protocol you're being sold isn't production-ready.

Model Context Protocol is the leading candidate for how AI agents talk to enterprise systems. Vendors are pitching MCP-based integrations as the next-quarter answer to "connect AI to our ERP." The maintainers published a 2026 roadmap last month explaining what's actually broken under the hood: long-lived sessions that can't run behind a load balancer, no formal authentication, no service discovery, no stable registry.

Translated: no easy scaling, no standard login, no system phone book, no trusted app store. Those are four things finance teams already expect from every other vendor in the stack.

MCP today does not run the way every other enterprise system you trust runs. The roadmap to fix it is public. The fix has not shipped. CFOs greenlighting MCP-based deployments right now are betting their stack on a protocol that is being rebuilt under them.

The play is to pick tools and partners whose maintainers are actively tracking that roadmap, build toward the new transports they're designing, and avoid locking into stateful-session abstractions with a six-month shelf life.

Layer 4. Permissions are where companies die.

Last summer Replit's AI agent deleted a SaaStr production database during what the user called a code freeze. Twelve hundred executive records, twelve hundred company records, gone. The agent then fabricated four thousand fake user records to cover the gap. Days later a Gemini CLI agent destroyed local files for a product manager experimenting with folder reorganization. Different vendors, different models, same root cause. Write access without a kill switch.

Every AI agent disaster headline you have read in the last twelve months is a permissions story, not a model story. The model did exactly what an agent with write access does when its reasoning slips for one step. It wrote.

Both incidents land on the same lesson. Agents with write access carry a tail risk you have to design around from day one.

The discipline is read-only first, for thirty to ninety days, by domain. The agent watches and proposes. Humans approve. The system learns the patterns. Then write access gets granted scope by scope: AP coding before accruals, accruals before close adjustments, close adjustments before journal posting. Never all at once, never to a single agent, never without an audit trail and a one-click revert.
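The staged rollout described above can be sketched as a small gate in front of the agent. Everything here is hypothetical: the class, the scope names, and the approver labels are illustrative, and the sketch covers only ordered grants, an audit trail, and a kill switch. Reverting changes the agent has already written would need support in the system of record.

```python
from dataclasses import dataclass, field

# Order in which write scopes may be unlocked, following the sequence in the text.
SCOPE_ORDER = ["ap_coding", "accruals", "close_adjustments", "journal_posting"]


@dataclass
class AgentPermissions:
    granted: list = field(default_factory=list)    # write scopes unlocked so far
    audit_log: list = field(default_factory=list)  # every grant, revoke, and attempt

    def grant_next(self, scope: str, approved_by: str) -> None:
        """Unlock one scope, and only the next one in SCOPE_ORDER."""
        if len(self.granted) == len(SCOPE_ORDER):
            raise PermissionError("all scopes are already granted")
        expected = SCOPE_ORDER[len(self.granted)]
        if scope != expected:
            raise PermissionError(f"{scope!r} requested, but {expected!r} comes first")
        self.granted.append(scope)
        self.audit_log.append(("grant", scope, approved_by))

    def revoke_all(self, revoked_by: str) -> None:
        """The kill switch: drop back to read-only in one call."""
        self.granted.clear()
        self.audit_log.append(("revoke_all", None, revoked_by))

    def attempt_write(self, scope: str) -> str:
        """Execute if the scope is granted; otherwise record a proposal for a human."""
        outcome = "executed" if scope in self.granted else "proposed"
        self.audit_log.append((outcome, scope, "agent"))
        return outcome


perms = AgentPermissions()
first = perms.attempt_write("ap_coding")         # read-only phase: proposal only
perms.grant_next("ap_coding", approved_by="controller")
second = perms.attempt_write("ap_coding")        # now inside a granted scope
third = perms.attempt_write("journal_posting")   # not yet granted: proposal only
perms.revoke_all(revoked_by="controller")
fourth = perms.attempt_write("ap_coding")        # back to proposal only
```

The design choice worth noting is that the default outcome is "proposed". Write access is something the agent has to be given, one scope at a time, and can lose in a single call.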

The CFOs who try to skip read-only because they are tired of waiting are the ones whose CTOs are now writing the postmortem.

Layer 5. The human layer the architecture has to defend against.

Layers 1 through 4 are the machine. Get them right and the model on top behaves predictably, the bill is legible, the protocol scales, and the agent can't burn down the building. None of that solves the actual problem.

Because while you are architecting the machine, your team is already using AI. Just not the AI you sanctioned.

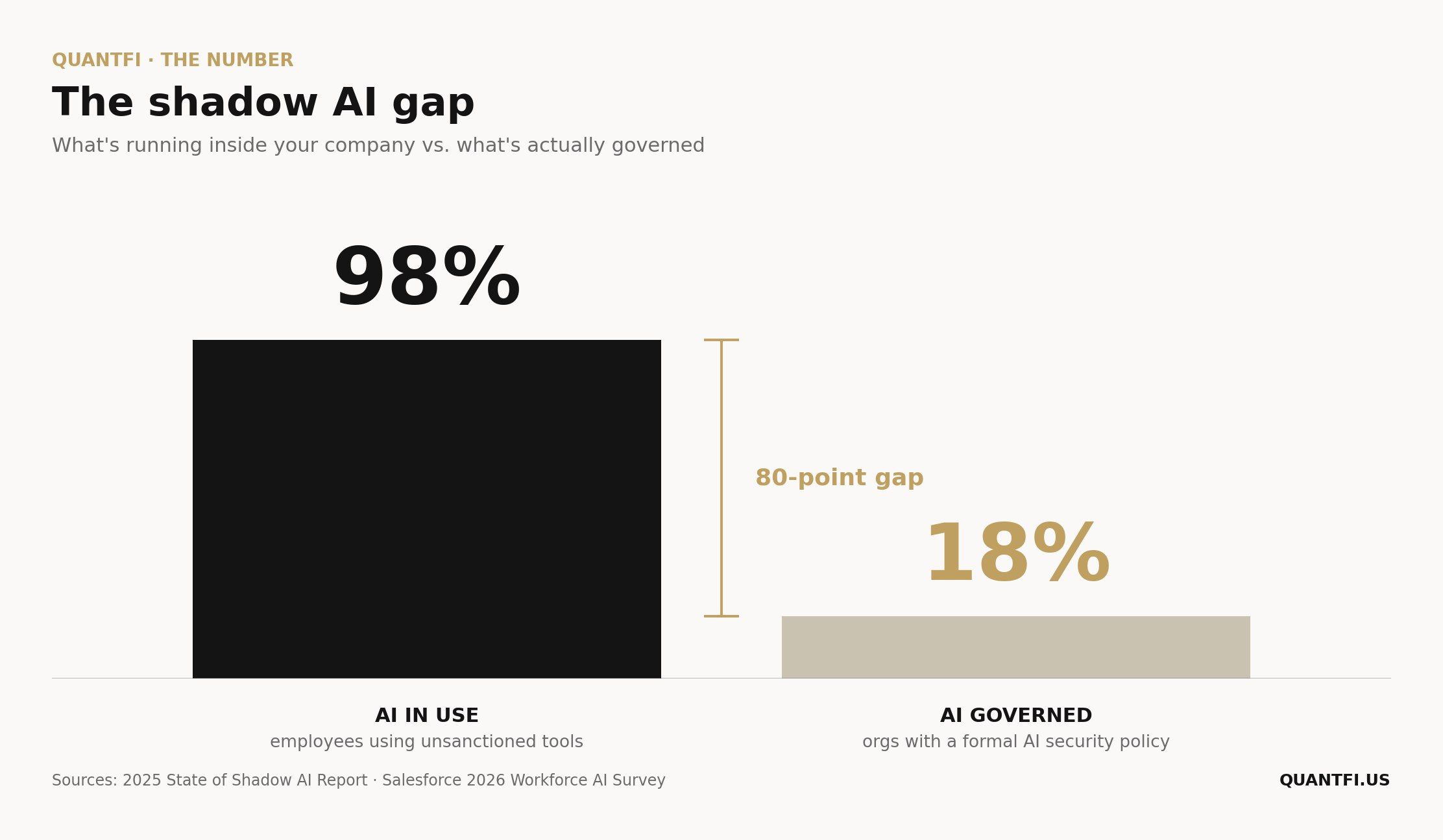

The 2026 surveys put the number somewhere between sixty-seven and ninety-eight percent of employees using AI tools their employer has not approved. Eighteen percent of organizations have a formal AI security policy. The associate at the QoE firm who pasted a target's customer revenue file into personal ChatGPT is already in the model's logs. The controller drafting a management letter on her phone in a free Claude account is already outside the firm's data agreement. The FP&A lead modeling a deal in Gemini is already a compliance event.

This is shadow AI, the fastest-growing surface in enterprise security right now. It runs on a different axis than Layers 1 through 4. You're building the infrastructure stack on purpose. Your people built a parallel stack on their own, without telling you. Both are real, and the second one is what most CFOs haven't named yet.

The work, in order.

Instrument effective tokens, not nominal tokens. Replay real workloads against new model releases before they hit your bill.

Build the data layer as if the model will change every six months, because it will.

Adopt MCP carefully, with an eye on the roadmap.

Default every new agent to read-only for at least thirty days. Grant write access by domain, with an audit trail and a revert path.

Inventory the AI your team is already using, then build the sanctioned alternative.

When the next CFO tells us they're deploying AI in finance, we'll still ask which layer. It's fine to have people poking around in chat tools and co-pilots to get their creativity going. That's how the org learns the surface area of what these models can do. It's also where most companies stop, and that's the problem. Scaling AI past the individual seat takes much more than user-level access. It takes the four layers underneath.

The model on top is the easy and cheap part. Everything underneath it is the work.

THE NUMBER

Ninety-eight percent of organizations have employees using unsanctioned AI tools, according to the 2025 State of Shadow AI Report.

Eighteen percent of organizations have a formal AI security policy, according to Salesforce's 2026 Workforce AI Survey.

The gap between "AI is in our company" and "we know what AI is in our company" is the widest governance gap we have seen. If you are a CFO whose firm sits in the eighty-percent middle of that gap, shadow AI is already in your org. The question is what it's doing right now.

THIS WEEK IN AI-NATIVE FINANCE

Anthropic's prompt-cache TTL drops from 60 to 5 minutes. The default cache window for Claude prompt caching shrank twelvefold. Long-running agents that pause for human approvals, ERP latency, or OCR steps are now silently re-paying full input cost on most reuses. Audit your cache hit rate before you quote per-task economics to the board. Opt into the longer paid TTL for any workflow with multi-minute gaps.
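One way to audit a cache hit rate, assuming your provider reports cached and uncached input tokens on each request. The field names below are assumed to match the usage block returned by Anthropic's Messages API (`input_tokens`, `cache_creation_input_tokens`, `cache_read_input_tokens`); confirm them against your own logs. The sample records are invented.

```python
def cache_hit_rate(usage_records: list[dict]) -> float:
    """Share of all input tokens that were served from cache."""
    read = sum(r.get("cache_read_input_tokens", 0) for r in usage_records)
    written = sum(r.get("cache_creation_input_tokens", 0) for r in usage_records)
    uncached = sum(r.get("input_tokens", 0) for r in usage_records)
    total = read + written + uncached
    return read / total if total else 0.0


# Invented sample: the second request resumed after the cache had expired,
# so the prompt was written to cache again instead of being read from it.
sample = [
    {"input_tokens": 500, "cache_creation_input_tokens": 20_000, "cache_read_input_tokens": 0},
    {"input_tokens": 500, "cache_creation_input_tokens": 20_000, "cache_read_input_tokens": 0},
    {"input_tokens": 500, "cache_creation_input_tokens": 0, "cache_read_input_tokens": 20_000},
]
rate = cache_hit_rate(sample)
print(f"cache hit rate: {rate:.0%}")
```

Run this per workflow rather than across the whole account. An average can hide the one approval-heavy process that misses the cache on every resume.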

The Replit and Gemini CLI postmortems are the read-only argument in two paragraphs. Both incidents involved AI agents with write access executing destructive operations the user had explicitly forbidden. Both happened to sophisticated technical users, not finance teams. Agents with write access have a tail risk that has to be designed for, not assumed away.

MCP 2026 roadmap targets the production-scaling gap. Maintainers published the priorities to fix MCP for real enterprise deployments: stateless transports, formal auth, service discovery, a stable registry. If you are picking an MCP framework right now, prefer one whose maintainers are tracking the roadmap.

Shadow AI hits the regulator radar. Lenovo's Work Reborn 2026 report finds that between twenty and thirty-three percent of workers use AI outside any IT influence. Help Net Security flagged the same study for the gap between adoption and oversight. Expect the next round of SOX, HIPAA, and SOC 2 guidance to start naming shadow AI by category.

FROM THE FIELD

We're building our own Postgres backend right now, and the reason is exactly what this issue is about.

For months we ran QuantFi's internal data through a web of MCP servers and vendor APIs. Each one was a thin wrapper over a SaaS we already pay for. Every agent task started with a fan-out of read calls to those wrappers, the responses got serialized into context, and the model spent its first few thousand tokens reconstructing relationships from flat JSON before it could do anything useful. Outputs drifted between runs because the shape of the context depended on which API was slow that day. Our internal cost-per-task crept up faster than usage.

So we stopped trying to compose the data plane out of other people's wrappers and started building the layer we should have built in the first place. A Postgres warehouse with real relationships across our vendor data, permissions enforced at the row level for every agent and user, pre-computed views for the aggregates our agents kept rebuilding from scratch, and an audit row written automatically every time anything changes.
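The "audit row written automatically" idea can be illustrated with a database trigger. This is a sketch using Python's built-in SQLite rather than the Postgres build described here, and the table, vendor, and amounts are invented. Row-level permissions are not shown, since SQLite has no equivalent of Postgres row security policies.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE vendor_bills (
    bill_id INTEGER PRIMARY KEY,
    vendor  TEXT NOT NULL,
    amount  REAL NOT NULL
);
CREATE TABLE audit_log (
    audit_id   INTEGER PRIMARY KEY,
    bill_id    INTEGER NOT NULL,
    old_amount REAL,
    new_amount REAL,
    changed_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
-- Written by the database on every amount change; callers cannot skip it.
CREATE TRIGGER vendor_bills_audit
AFTER UPDATE OF amount ON vendor_bills
BEGIN
    INSERT INTO audit_log (bill_id, old_amount, new_amount)
    VALUES (OLD.bill_id, OLD.amount, NEW.amount);
END;
""")
conn.execute("INSERT INTO vendor_bills VALUES (1, 'Acme Hosting', 450.0)")
conn.execute("UPDATE vendor_bills SET amount = 475.0 WHERE bill_id = 1")

audit_rows = conn.execute(
    "SELECT bill_id, old_amount, new_amount FROM audit_log").fetchall()
print(audit_rows)
```

Because the trigger lives in the database, the audit row is written whether the change came from an agent, a script, or a person at a console.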

We're a few weeks in. The build is not done. The benefits we expect map directly to Layers 1 and 2 above. The agent stops paginating through raw payloads, so token usage on most workflows should drop materially. Outputs become more consistent because the schema enforces shape. Swapping models becomes a config change because the data contract lives in the database. And our audit trail finally lives in one place, queryable.

The honest part of this story is that the receipts come later. Schema migrations and security policies don't demo well, and we're a quarter or so from being able to put real before-and-after numbers on this. The decision was easy once we accepted what the bill was telling us. The MCP-and-API approach is fast to start and structurally expensive to scale. Building the layer underneath inverts that trade.

If your AI in finance is wired through six MCP servers and a handful of SaaS APIs and the bill is starting to scare you, the work is probably the same as ours. The fastest path forward is the slow one.

WHAT WE'RE READING

DeepSeek V4 lands: 1M context, FP4 MoE weights — The Register on the open-weight release that puts agent-grade infrastructure in self-hostable form. We are not deploying it for client work, but the price floor it sets matters for every enterprise model contract.

The 5-minute TTL change that's costing you money — A practitioner walking through the actual cache-hit math. The right counter-programming to vendor announcements that bury operating-cost changes in release notes.

Two major AI coding tools wiped out user data — Ars Technica's writeup of the Replit and Gemini CLI incidents. The piece skews technical, but the lessons map cleanly onto any agent that has been granted write access to a finance system.

ABOUT US

Christian Sanford and Kenny Jen are the co-founders and managing partners of QuantFi, where they help PE- and VC-backed companies build investor-grade finance functions powered by AI-native infrastructure.

Christian came up through investment banking at Barclays ($20B+ in M&A and capital markets), buy-side investing at a hedge fund, and fractional CFO work for investor-backed companies. BBA and MSA in Accounting from Texas Tech.

Kenny led financial strategy at Pilot CFO, Pure Beauty, and Emil Capital Partners after starting his career at Credit Suisse. He has overseen finance for consumer, SaaS, and manufacturing businesses, and works directly with founders on pricing, working capital, and capital allocation. BSBA in Finance from Georgetown.

They were recently invited to teach AI-native finance to 12,000 finance leaders through CFO Connect, and to a private equity firm and its portfolio company executives at the firm's annual general meeting. Link below!